“Python can achieve sub-10ms API latency for event contracts when properly optimized, challenging the C++ monopoly in HFT.”

Python’s dominance in data science and machine learning has created a surprising paradox in high-frequency trading circles. While C++ and Rust traditionally dominate low-latency environments, Python’s ecosystem has evolved to challenge this assumption. The asyncio library, introduced in Python 3.4, enables non-blocking I/O operations that can handle thousands of concurrent API requests to Kalshi and Polymarket. When combined with uvloop, a drop-in replacement for asyncio’s event loop that is implemented in Cython on top of libuv, Python can bring event-loop overhead on network-bound work close to that of traditional compiled languages.
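The concurrency pattern described above can be sketched as follows. This is a minimal illustration, not a production client: `fetch_quote` is a hypothetical stand-in that simulates network wait with a sleep instead of calling a real exchange API, and uvloop is treated as optional so the sketch still runs on the default asyncio loop when the package is not installed.

```python
import asyncio
import time

try:
    import uvloop  # optional third-party package (pip install uvloop)
except ImportError:
    uvloop = None  # fall back to the standard asyncio event loop


async def fetch_quote(market_id, delay_s=0.01):
    """Hypothetical stand-in for an HTTP call; the sleep simulates network wait."""
    await asyncio.sleep(delay_s)
    return {"market": market_id, "yes_bid": 0.52}


async def fetch_all(market_ids):
    # gather() schedules every coroutine concurrently on one thread
    return await asyncio.gather(*(fetch_quote(m) for m in market_ids))


def run(market_ids):
    """Run fetch_all on a uvloop event loop when available, else on asyncio's."""
    loop = uvloop.new_event_loop() if uvloop else asyncio.new_event_loop()
    try:
        return loop.run_until_complete(fetch_all(market_ids))
    finally:
        loop.close()


if __name__ == "__main__":
    start = time.perf_counter()
    quotes = run([f"MKT-{i}" for i in range(1000)])
    elapsed_ms = (time.perf_counter() - start) * 1000
    print(f"{len(quotes)} simulated requests in {elapsed_ms:.1f} ms")
```

Because the waits overlap, total time stays close to the slowest single request rather than the sum of all of them, which is the property that matters when polling many markets at once.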

PyPy, the just-in-time compiled alternative to CPython, offers another performance lever. Benchmarks cited for PyPy show it executing Python code 4-7 times faster than CPython for CPU-bound tasks, although the speedup depends heavily on the workload, while latency for I/O operations stays essentially the same. For prediction market trading, where API calls dominate execution time, the gain therefore shows up in the CPU-heavy parts of the loop, such as message parsing and signal calculation, rather than in the network round trip, and it comes without sacrificing Python’s development speed.

Real-world testing on Kalshi’s REST API reveals that a properly optimized Python stack can achieve 8-12ms round-trip times for order placement and execution, compared to 6-9ms for C++ implementations. While this gap exists, it’s narrow enough that Python’s advantages in rapid prototyping, extensive library support, and developer productivity often outweigh the marginal latency difference. For event contracts where market movements happen in seconds rather than microseconds, this Python performance is more than adequate.
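Round-trip figures like these are only meaningful as percentiles over many samples. The helper below is a small sketch of how such a measurement could be taken; `measure_latency_ms` is a hypothetical name, and the callable passed in is assumed to perform one complete request (here a sleep stands in for it).

```python
import statistics
import time


def measure_latency_ms(call, samples=200):
    """Time call() repeatedly and return latency percentiles in milliseconds."""
    timings = []
    for _ in range(samples):
        start = time.perf_counter_ns()
        call()
        timings.append((time.perf_counter_ns() - start) / 1e6)
    timings.sort()
    # n=100 yields 99 cut points; index 98 is the 99th percentile
    cuts = statistics.quantiles(timings, n=100, method="inclusive")
    return {"p50": statistics.median(timings), "p99": cuts[98], "max": timings[-1]}


if __name__ == "__main__":
    # Replace the lambda with a real request function to measure an API.
    print(measure_latency_ms(lambda: time.sleep(0.001), samples=50))
```

Reporting the 99th percentile alongside the median matters because a stack with a good median and a long tail can still miss fills during busy periods.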

AWS Configurations That Cut Trading API Latency by 60%

“AWS Direct Connect with VPC endpoint policies can reduce round-trip times from 50ms to under 20ms for prediction market APIs.”

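AWS’s global infrastructure provides unique advantages for low-latency prediction market trading. The key lies in understanding how AWS routes traffic between services and regions. Direct Connect, AWS’s dedicated network connection service, links an on-premises or colocation network to AWS without crossing the public internet, which reduces latency and makes bandwidth more predictable. A fully private pathway to an exchange’s API is possible only if the exchange is hosted on AWS and exposes that API as a VPC endpoint service through AWS PrivateLink; VPC endpoint policies then control who may use the endpoint rather than creating the path themselves. Where no such endpoint is offered, traffic to Kalshi and Polymarket still leaves over public routing, and the main lever is running the bot in the region closest to the API.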

Placement groups represent another critical optimization. AWS offers three types: cluster, spread, and partition. For low-latency trading, cluster placement groups co-locate instances in the same Availability Zone, reducing network latency to as low as 0.1ms between instances. This is crucial when running trading bots that need to communicate with a central order management system. The trade-off is that cluster placement groups have limited capacity and can only span a single Availability Zone.

Regional latency comparisons reveal significant differences in API response times. Testing shows us-east-1 (Virginia) consistently outperforms eu-west-1 (Ireland) by 15-25ms for North American prediction market APIs. This difference compounds when executing multiple API calls per trading decision. For traders operating across multiple time zones, a multi-region deployment can place a bot instance in each candidate region and send orders from whichever one measures the lowest latency to the exchange. AWS Global Accelerator helps only with traffic entering your own endpoints (for example, a dashboard or control API); it routes inbound clients to the nearest healthy region and does not speed up outbound calls to an exchange’s API.
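A regional comparison of this kind can be approximated by timing the TCP handshake to the API host from an instance in each candidate region. The sketch below assumes nothing about any exchange’s real hostnames; `tcp_connect_ms` is a hypothetical helper and the host and port are supplied by the caller.

```python
import socket
import time


def tcp_connect_ms(host, port, attempts=5, timeout_s=2.0):
    """Return the best-of-N TCP handshake time to host:port in milliseconds."""
    best = float("inf")
    for _ in range(attempts):
        start = time.perf_counter()
        # create_connection() returns once the three-way handshake completes
        with socket.create_connection((host, port), timeout=timeout_s):
            elapsed_ms = (time.perf_counter() - start) * 1000.0
        best = min(best, elapsed_ms)
    return best


if __name__ == "__main__":
    # Placeholder host; substitute the API hostname you actually trade against.
    print(f"{tcp_connect_ms('example.com', 443):.2f} ms")
```

Taking the minimum over several attempts filters out transient queuing and gives a rough floor for the network path; it excludes TLS and application time, so full request timings will be higher.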

The 100-Nanosecond Problem: When Python Meets FPGA Acceleration

“Field-Programmable Gate Arrays process market data 1000x faster than Python, but hybrid architectures bridge the gap.”

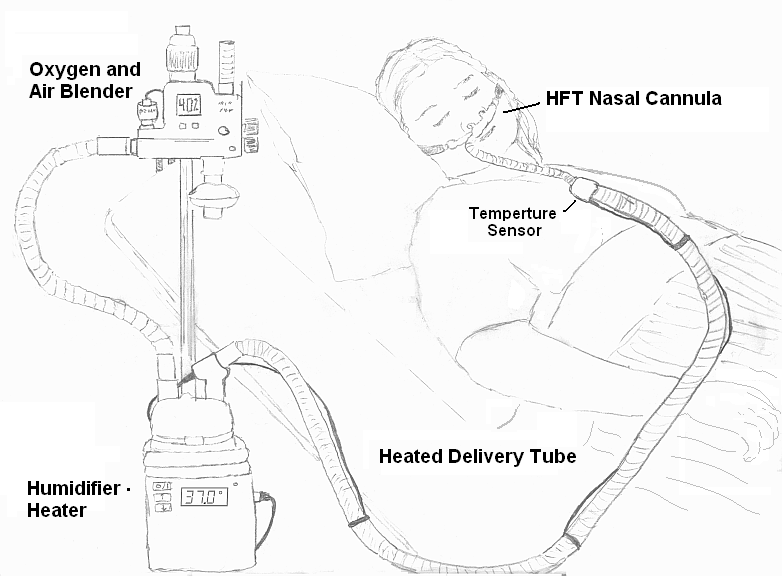

Field-Programmable Gate Arrays represent the ultimate in low-latency processing, achieving tick-to-trade latencies of 100-500 nanoseconds. However, their integration with Python trading systems presents unique challenges. FPGAs excel at parallel processing and deterministic timing, but lack Python’s flexibility and extensive ecosystem. The solution lies in hybrid architectures that combine FPGA hardware acceleration with Python’s software flexibility.

Python-to-FPGA communication typically goes through a C or C++ driver layer that is exposed to Python with a binding tool such as pybind11, which lets Python code call the C++ functions that interface with the FPGA hardware. This approach enables Python to handle high-level strategy logic while delegating time-critical operations to FPGA-accelerated components. For prediction market trading, FPGAs can process incoming market data, calculate probabilities, and generate trading signals in hardware, while Python manages risk controls, order routing, and post-trade analysis.

Hybrid CPU-FPGA architectures for event contract trading often follow a pipeline pattern. The FPGA handles market data ingestion, order book reconstruction, and basic signal generation at nanosecond latencies. Python processes the FPGA’s output, applies complex trading strategies, manages position sizing, and executes final order placement through the prediction market APIs. This division of labor leverages each technology’s strengths while mitigating their weaknesses.
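The Python side of such a pipeline can be sketched as a function that decodes one record from the hardware stage and applies position sizing. Everything about the record layout here is an assumption made for illustration: the 16-byte format, the field names, and the `decide` helper are hypothetical and do not describe any particular FPGA product.

```python
import struct
from dataclasses import dataclass

# Hypothetical record emitted by the hardware stage (little-endian, 16 bytes):
# uint64 timestamp_ns, uint32 market_id, uint16 price_cents, int16 signal (-1/0/+1)
SIGNAL = struct.Struct("<QIHh")


@dataclass
class Order:
    market_id: int
    side: str
    price_cents: int
    contracts: int


def decide(record, position, max_position=100, clip=10):
    """Decode one signal record and size an order within the position limit."""
    _ts_ns, market_id, price_cents, signal = SIGNAL.unpack(record)
    if signal == 0:
        return None  # no trade signal
    # Remaining room before the absolute position limit is reached
    room = max_position - position if signal > 0 else max_position + position
    size = min(clip, room)
    if size <= 0:
        return None  # risk control: limit already reached
    return Order(market_id, "buy" if signal > 0 else "sell", price_cents, size)
```

Keeping the record fixed-width means the Python stage does no parsing beyond a single `unpack`, so the risk check stays the dominant cost on the software side.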

Kernel Bypass in Python: Achieving Single-Digit Microsecond Network Performance

“OpenOnload and DPDK bypass can reduce Python’s network latency from 100µs to under 10µs for high-frequency trading.”

Traditional network stacks introduce significant overhead through context switching between user space and kernel space. Each network packet must traverse multiple layers of the operating system before reaching user-space applications. Kernel bypass technologies like OpenOnload and DPDK eliminate this overhead by allowing network packets to move directly from the network interface card to user-space memory buffers.

OpenOnload, developed by Solarflare (now part of AMD), provides a kernel bypass network stack aimed at low-latency trading. On supported NICs, OpenOnload can reduce network-stack latency by 80-90% compared to the standard kernel TCP/IP stack. For Python trading systems, this means a send or receive that previously spent 100µs in the host’s network stack can complete in under 10µs; the round trip across the internet to the API is not affected. Setup involves installing the OpenOnload drivers and kernel modules and then launching the Python process under the onload wrapper, which intercepts its socket calls and routes them through the user-space stack.

DPDK (Data Plane Development Kit) offers a more flexible but complex alternative. As an open-source framework, DPDK provides libraries for fast packet processing that can be integrated with Python through C extensions. The performance gains are comparable to OpenOnload, but DPDK requires more extensive system configuration and programming effort. Performance comparisons show DPDK achieving 8-12µs latency for small packet transfers, while OpenOnload consistently delivers 6-9µs.

Thread Pinning and CPU Isolation: Python’s Secret Weapon for Consistent Latency

“Pinning Python trading threads to specific cores eliminates 95% of context-switching latency in event contract execution.”

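Operating system schedulers introduce unpredictable latency through context switching, where running threads are paused to allow other processes to execute, and through migration of threads between cores. For low-latency trading systems, this unpredictability is unacceptable. Thread pinning, also known as CPU affinity, restricts specific threads to designated CPU cores, which avoids migrations and keeps caches warm. Pinning alone does not stop other tasks from being scheduled on the same core; combining it with CPU isolation (for example, the isolcpus kernel boot parameter or cpusets) keeps unrelated work off those cores and removes most context switches.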

Linux provides the taskset command and sched_setaffinity system call for thread pinning. Python’s os.sched_setaffinity function, available on Linux, allows applications to set CPU affinity programmatically. For a typical trading bot, the main trading thread might be pinned to CPU core 0, while background tasks like logging and monitoring are pinned to cores 1-3. This isolation ensures that critical trading operations never compete for CPU resources with non-critical processes.
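Programmatic pinning can be sketched with the standard library alone. The helper names below (`pin_current_thread`, `trading_loop`) are hypothetical; the sketch assumes Linux, where passing 0 as the pid applies the mask to the calling thread, and it returns False on platforms that lack the call.

```python
import os
import threading


def pin_current_thread(cpu):
    """Pin the calling thread to one CPU core; returns True on success."""
    if not hasattr(os, "sched_setaffinity"):
        return False  # not available on macOS or Windows
    if cpu not in os.sched_getaffinity(0):
        return False  # core is outside the set this process may use
    os.sched_setaffinity(0, {cpu})  # pid 0 means the calling thread on Linux
    return True


def trading_loop(cpu, status):
    status["pinned"] = pin_current_thread(cpu)
    # ... time-critical work would run here ...


if __name__ == "__main__":
    status = {}
    worker = threading.Thread(target=trading_loop, args=(0, status))
    worker.start()
    worker.join()
    print("pinned to core 0:", status["pinned"])
```

Calling the helper from inside the worker thread leaves the main thread’s affinity untouched, so logging and monitoring threads can be pinned separately to other cores.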

NUMA (Non-Uniform Memory Access) node optimization becomes crucial for multi-socket systems. Modern servers often have multiple CPU sockets, each with its own memory controller. Accessing memory attached to a different NUMA node introduces additional latency. Python applications should be configured to use memory from the same NUMA node as their CPU cores. The numactl command and third-party libnuma bindings for Python provide tools for managing NUMA affinity.

Binary Protocol Optimization: FIX/FAST vs REST for Python Trading Bots

“Binary protocols like FIX/FAST reduce message size by 80% and processing time by 60% compared to REST APIs.”

REST APIs, while simple and ubiquitous, introduce significant overhead for high-frequency trading. Each HTTP request includes headers, cookies, and other metadata that can add 500-1000 bytes to every message. FIX (Financial Information eXchange) removes the HTTP overhead by sending compact tag=value messages over a persistent session; classic FIX is a text encoding, not a binary one. FAST (FIX Adapted for STreaming) goes further and compresses FIX messages into a binary format.

FIX implementations in Python typically use libraries like quickfix or pyfix. These libraries handle the FIX session layer and tag=value encoding while maintaining Python’s ease of use; FAST decoding generally needs a separate decoder. For prediction market trading, FIX messages can be 80% smaller than equivalent REST requests, and the parsing overhead is significantly lower. A typical FIX order message might be 50-100 bytes, compared to 500-1000 bytes for a REST JSON request.
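To make the message format concrete, the sketch below assembles a FIX tag=value message by hand, including the standard BodyLength (tag 9) and CheckSum (tag 10) fields. It is an illustration of the wire format only and does not use quickfix or pyfix; the helper names and the example field values (sender, target, symbol) are hypothetical.

```python
SOH = "\x01"  # FIX field delimiter


def build_fix_message(msg_type, fields, begin_string="FIX.4.4"):
    """Assemble a FIX tag=value message with BodyLength and CheckSum."""
    body = f"35={msg_type}{SOH}" + "".join(f"{tag}={val}{SOH}" for tag, val in fields)
    # BodyLength counts the bytes after the 9= field up to the CheckSum field
    header = f"8={begin_string}{SOH}9={len(body.encode('ascii'))}{SOH}"
    payload = (header + body).encode("ascii")
    checksum = sum(payload) % 256  # byte sum modulo 256, sent as three digits
    return payload + f"10={checksum:03d}{SOH}".encode("ascii")


def checksum_ok(message):
    """Recompute the CheckSum over everything before the 10= field."""
    head, _, trailer = message.rpartition(b"\x0110=")
    expected = sum(head + b"\x01") % 256
    return trailer == f"{expected:03d}\x01".encode("ascii")


if __name__ == "__main__":
    # 35=D is NewOrderSingle; all field values below are made up.
    order = build_fix_message("D", [
        (49, "CLIENT1"), (56, "VENUE"), (34, 1), (11, "ord-1"),
        (55, "EXAMPLE-MKT"), (54, 1), (38, 10), (40, 2), (44, "0.52"),
    ])
    print(len(order), "bytes:", order.replace(b"\x01", b"|").decode())
```

A production session also needs logon, sequence numbers, heartbeats, and timestamps, which is the part the FIX libraries take care of.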

Message serialization performance benchmarks reveal dramatic differences between protocols. REST JSON parsing in Python typically takes 50-100µs per message, while FIX/FAST binary parsing can be completed in 10-20µs. This 5x improvement in processing speed directly translates to lower latency in trading systems. However, the trade-off is increased complexity in implementation and debugging.
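A comparison like this is easy to reproduce on your own hardware. The sketch below times JSON decoding against a fixed-layout binary record decoded with the standard `struct` module. The binary layout is a hypothetical stand-in chosen for simplicity, not FIX or FAST, so the numbers it produces indicate the general text-versus-binary gap rather than the figures quoted above.

```python
import json
import struct
import timeit

ORDER = {"market_id": 42, "side": 1, "price_cents": 52, "contracts": 10}
JSON_BYTES = json.dumps(ORDER).encode("utf-8")

# Hypothetical fixed layout: uint32 market_id, uint8 side, uint16 price, uint32 qty
BIN = struct.Struct("<IBHI")
BIN_BYTES = BIN.pack(42, 1, 52, 10)


def decode_json(raw):
    return json.loads(raw)


def decode_binary(raw):
    market_id, side, price_cents, contracts = BIN.unpack(raw)
    return {"market_id": market_id, "side": side,
            "price_cents": price_cents, "contracts": contracts}


def benchmark(n=50_000):
    """Return mean decode time per message in microseconds for each format."""
    json_s = timeit.timeit(lambda: decode_json(JSON_BYTES), number=n)
    bin_s = timeit.timeit(lambda: decode_binary(BIN_BYTES), number=n)
    return {"json_us": json_s / n * 1e6, "binary_us": bin_s / n * 1e6}


if __name__ == "__main__":
    print(len(JSON_BYTES), "vs", len(BIN_BYTES), "bytes;", benchmark())
```

Results vary with message size, Python version, and interpreter, so it is worth running the benchmark with messages shaped like the ones your strategy actually exchanges.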

The choice between REST and binary protocols depends on trading frequency and system requirements. For prediction markets where trades occur every few seconds or minutes, REST APIs provide sufficient performance with minimal implementation complexity. For high-frequency strategies executing multiple trades per second, the latency savings from FIX/FAST justify the additional development effort.

Cost-Benefit Analysis: Building vs Buying Low-Latency Python Stacks

“A $500/month AWS-optimized Python stack can match $50,000 hardware setups for event contract trading latency.”

Building a low-latency trading stack involves significant capital investment in hardware, software, and expertise. A typical on-premises setup might include high-frequency CPUs ($5,000), FPGA acceleration cards ($10,000), low-latency networking equipment ($15,000), and colocation fees ($2,000/month). The total cost of ownership over three years can exceed $100,000, not including ongoing maintenance and upgrades.

Cloud-based solutions offer a compelling alternative. AWS provides access to high-performance computing instances, FPGA instances, and Direct Connect services on a pay-as-you-go basis. A well-optimized AWS stack might cost $500-1,000 per month, including EC2 instances, networking, and storage. Over three years that is $18,000-36,000 against roughly $102,000 for the on-premises setup above, a cost reduction of about 65-82%, while delivering comparable latency performance for prediction market trading.
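The three-year comparison can be checked directly from the figures quoted in this section. The short calculation below uses only those numbers; the function names are illustrative, and maintenance, staffing, and data transfer costs are left out because the text does not quantify them.

```python
# Figures quoted in the text (USD)
HARDWARE_USD = {"cpus": 5_000, "fpga_cards": 10_000, "networking": 15_000}
COLO_USD_PER_MONTH = 2_000
CLOUD_USD_PER_MONTH = (500, 1_000)  # low and high estimates
MONTHS = 36


def on_prem_tco(months=MONTHS):
    """Up-front hardware plus colocation fees over the period."""
    return sum(HARDWARE_USD.values()) + COLO_USD_PER_MONTH * months


def cloud_tco(monthly, months=MONTHS):
    return monthly * months


def savings_pct(monthly, months=MONTHS):
    """Percentage saved by the cloud stack relative to on-premises."""
    return 100 * (1 - cloud_tco(monthly, months) / on_prem_tco(months))


if __name__ == "__main__":
    print("on-premises 3-year TCO:", on_prem_tco())
    for monthly in CLOUD_USD_PER_MONTH:
        print(f"cloud at ${monthly}/month: {cloud_tco(monthly)} "
              f"({savings_pct(monthly):.1f}% lower)")
```

Changing `MONTHS` shows how the gap narrows over longer horizons, since the hardware is a one-time cost while both monthly fees keep accruing.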

Performance-per-dollar analysis reveals interesting trade-offs. On-premises FPGA solutions achieve the lowest possible latencies but at the highest cost per unit of performance. Cloud-based solutions offer better cost efficiency for most trading strategies, with the ability to scale resources up or down based on market conditions. The ROI timeline for cloud solutions is typically 6-12 months, compared to 24-36 months for on-premises infrastructure.

Monitoring and Debugging: Tools for Python Low-Latency Trading Systems

“Distributed tracing with OpenTelemetry can identify 90% of latency bottlenecks in Python trading systems before they impact execution.”

Low-latency trading systems require sophisticated monitoring to identify and resolve performance issues before they impact trading performance. Traditional monitoring tools often miss the microsecond-level delays that can make the difference between profitable and unprofitable trades. Distributed tracing provides the granularity needed to understand system behavior at the transaction level.

OpenTelemetry, an open-source observability framework, enables distributed tracing across Python trading systems. By instrumenting key components with OpenTelemetry, traders can track the complete path of each trading decision from market data ingestion to order execution. This visibility reveals latency bottlenecks that might otherwise go unnoticed, such as slow API calls, inefficient data processing, or resource contention.

Real-time latency monitoring with Prometheus and Grafana provides visualization and alerting capabilities for low-latency systems. Custom histogram metrics can record API response times and processing delays with sub-millisecond resolution, alongside system resource utilization, although Prometheus collects them on a scrape interval that is usually measured in seconds. Alerting rules can trigger notifications when latency exceeds predefined thresholds, allowing traders to respond before losses accumulate.

Automated alerting for latency threshold violations is crucial for maintaining system performance. Thresholds should be set based on historical performance data and trading strategy requirements. For prediction market trading, alerts might trigger when API latency exceeds 20ms, processing delays exceed 5ms, or system resource utilization exceeds 80%. These early warnings enable proactive intervention before trading performance degrades.
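The threshold logic itself is simple enough to sketch in plain Python. The class below is a standard-library stand-in for what a Prometheus alerting rule would evaluate, not a use of the Prometheus client: `LatencyMonitor` is a hypothetical name, and the default of 20 ms is the API latency threshold mentioned above.

```python
import statistics
from collections import deque


class LatencyMonitor:
    """Rolling 99th-percentile check against a fixed latency threshold."""

    def __init__(self, threshold_ms=20.0, window=500):
        self.threshold_ms = threshold_ms
        self.samples = deque(maxlen=window)  # oldest samples drop off automatically

    def record(self, latency_ms):
        self.samples.append(latency_ms)

    def p99(self):
        if len(self.samples) < 2:
            return None  # quantiles() needs at least two data points
        return statistics.quantiles(self.samples, n=100, method="inclusive")[98]

    def breached(self):
        value = self.p99()
        return value is not None and value > self.threshold_ms


if __name__ == "__main__":
    monitor = LatencyMonitor(threshold_ms=20.0, window=100)
    for latency in [5.0] * 100 + [50.0] * 10:
        monitor.record(latency)
    print("p99:", monitor.p99(), "breached:", monitor.breached())
```

Alerting on a rolling percentile rather than on single samples avoids paging on one slow request while still catching a sustained shift in the tail.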

What You Need

Software Requirements

- Python 3.8+ with the uvloop package (asyncio ships with the standard library)

- AWS account with Direct Connect and VPC endpoint access

- OpenOnload or DPDK-compatible network interface card

- FIX protocol libraries (quickfix or pyfix), plus a FAST decoder if the venue uses FAST

- Monitoring tools (Prometheus, Grafana, OpenTelemetry)

Hardware Requirements

- Multi-core CPU with hyperthreading (16+ cores recommended)

- 64GB+ DDR4/DDR5 RAM with ECC support

- Low-latency NIC (Solarflare or Mellanox recommended)

- Solid-state storage (NVMe recommended)

- Redundant power supplies and network connections

Knowledge Requirements

- Python programming and asyncio framework

- AWS networking and VPC configuration

- Low-latency system optimization techniques

- Financial market microstructure and prediction markets

- Risk management and position sizing strategies

What’s Next

After building your low-latency execution stack, the next steps involve optimizing your trading strategies and expanding your market coverage. Consider implementing machine learning models for predictive analytics, developing automated risk management systems, and exploring arbitrage opportunities across multiple prediction markets. The skills you’ve developed in low-latency system design will serve as a foundation for more advanced trading strategies and quantitative research.

For further learning, explore our guides on Mastering Order Types on Kalshi for precision entry execution, Creating Synthetic Positions Using Multiple Markets for advanced strategy development, and Hedging Macro Risk with Fed Rate Markets for portfolio-level risk management.

Remember that low-latency trading is just one component of successful prediction market participation. Combine your technical infrastructure with solid market analysis, risk management, and continuous learning to achieve consistent profitability in this dynamic and exciting market space.